IDRIS: GENERAL INTRODUCTION

This document is principally addressed to new users of IDRIS. In it we have provided information which is indispensable for using IDRIS resources.

1. Introduction to IDRIS

2. The IDRIS machine

3. Requesting allocations of hours on IDRIS machine

4. How to obtain an account at IDRIS

5. How to connect to an IDRIS machine

6. Management of your account and your environment variables

7. The disk spaces

8. Commands for file transfers

9. The module command

10. Compilation

11. Code execution

12. Training courses offered at IDRIS

13. IDRIS documentation

14. User support

For more complete documentation on the points addressed in this document, please consult the IDRIS site: www.idris.fr/eng/

1. Introduction to IDRIS

The Missions and Objectives of IDRIS

IDRIS (the Institute for Development and Resources in Intensive Scientific Computing), founded in 1993, is the CNRS national centre for very high performance computing (HPC) and Artificial Intelligence (AI). It serves the scientific research communities which rely on extreme computing, both public and private (on condition of open research with publication of results).

Both a computing resource centre and a centre of expertise in HPC and AI, IDRIS (www.idris.fr) is a research support unit of the CNRS under the auspices of the CNRS Open Research Data Department (DDOR). It is administratively attached to the Institute for Computing Sciences (INS2I) but has a multidisciplinary vocation within the CNRS. Its operating procedures are similar to those of the IR* (“Star” Research Infrastructures) of the Ministère de l'Enseignement supérieur et de la Recherche (MESR).

IDRIS is currently directed by M. Pierre-François Lavallée.

Scientific management of Resources

The allocation of computing hours for the three national computing centres (CINES, IDRIS and TGCC) is organized under the coordination of GENCI (Grand Équipement National de Calcul Intensif).

Requests for resources are made through the eDARI portal, the common web site for the three computing centres.

Requests may be submitted for computing hours for new projects, to renew an existing project or to obtain supplementary hours. These hours are valid for one year.

Depending on the number of hours requested, the file will be considered a regular access file (accès régulier) or a dynamic access file (accès dynamique):

- Regular access: requests for computing resources can be submitted at any time during the year, but the dossiers are evaluated twice a year, in May and November. The proposals are examined from a scientific perspective by the members of the Thematic Committees, who draw on the technical expertise of the centres' application assistance teams as needed. Subsequently, an evaluation committee meets to decide upon the resource requests and make approval recommendations to the Attribution Committee, under the authority of GENCI, for distributing computing hours to the three national centres.

- Dynamic access: the requests are validated by the IDRIS director, who evaluates the scientific and technical quality of the proposal and may ask for the advice of an expert from a scientific thematic committee.

In both cases, IDRIS management studies requests for supplementary resources as needed (“demandes au fil de l'eau”) and attributes limited hours in order to avoid blocking ongoing projects.

For more information, you can consult the web page “Requesting resource hours on IDRIS machine”.

You also have access to a short video about resource allocation and account opening on Jean Zay on our YouTube channel "Un œil sur l'IDRIS" (it is in French, but the automatic subtitles work reasonably well).

The IDRIS User Committee

The role of the User Committee is to be a liaison for dialogue with IDRIS so that all the projects which received allocations of computer resources can be successfully conducted in the best possible conditions. The committee transmits the observations of all the users regarding the functioning of the centre and the issues are discussed with IDRIS in order to determine the appropriate changes to be made.

Ideally, the User Committee consists of 2 members elected from each scientific discipline who can be contacted at the following address: The User Committee pages are available to IDRIS users by connecting to the IDRIS Extranet, section: Comité des utilisateurs.

This space contains the reports on the operation of the IDRIS machine as well as the latest meeting minutes.

Working Group for Single FSD Requests

A working group has been in place for two years. It includes the head of the PPST department (Protection du Potentiel Scientifique et Technique) of the FSD (Fonctionnaire Sécurité Défense), three people from IDRIS including the director, and user representatives:

- Marie-Bernadette.Lepetit@neel.cnrs.fr ; Tel 04.76.88.90.45

- Alain.Miniussi@oca.eu Tel : +33 4 92 00 30 09 / +33 4 83 61 85 44

For years, users have been requesting that only one FSD authorization request be required for all three national computing centers and that the response be valid for all three centers.

This request has been accepted in principle for a long time, but is still only partially implemented. This group is working to have it implemented as quickly as possible. We invite anyone for whom this does not work (automatic propagation without having to resubmit requests) to contact the chair of the User Committee (Marie-Bernadette.Lepetit@neel.cnrs.fr ; Tel 04.76.88.90.45).

The members of the User Committee participating in the working group will also take on the role of PPST correspondents:

- Advice on FSD request procedures

- Answering common user questions

- Relaying issues encountered with FSD instances

- Providing users with information on the procedures to be accepted into data centers.

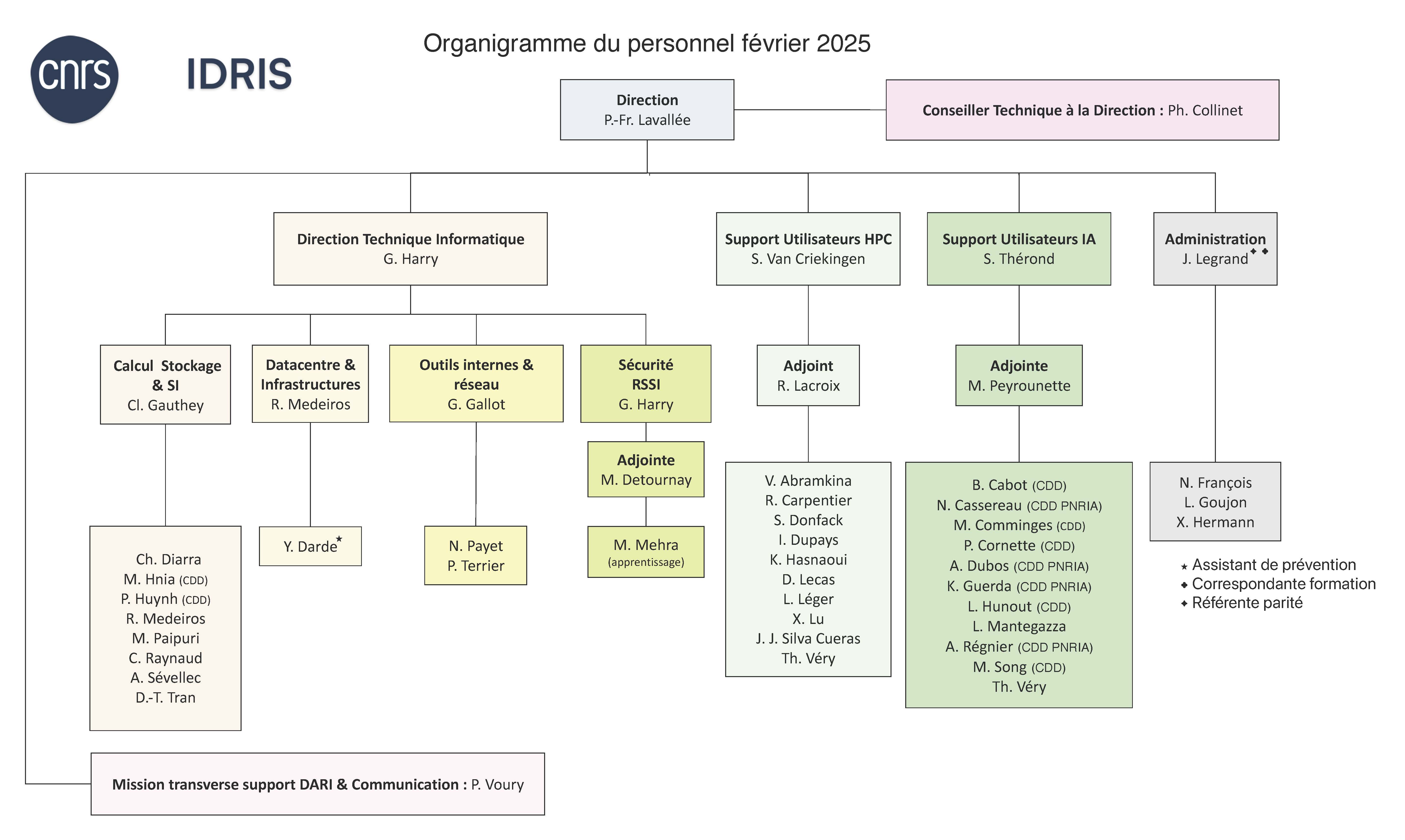

IDRIS personnel

2. The IDRIS machine

Jean Zay: Eviden BullSequana XH3000, HPE SGI 8600 supercomputer

Jean Zay is a supercomputer with an Eviden BullSequana XH3000 part and an HPE SGI 8600 part, forming a total of five partitions: one partition containing scalar nodes (having only CPUs), and four partitions containing accelerated nodes (hybrid nodes equipped with both CPUs and GPUs). All the HPE SGI 8600 compute nodes are interconnected by an Intel Omni-Path network (OPA) and all the Eviden BullSequana XH3000 compute nodes are interconnected by an InfiniBand network. All nodes access a parallel file system with very high bandwidth.

Following three successive extensions, the cumulated peak performance of Jean Zay reached 125.9 Pflop/s starting in July 2024.

Access to the various hardware partitions of the machine depends on the type of job submitted (CPU or GPU) and the Slurm partition requested for its execution (see the details of the Slurm CPU partitions and the Slurm GPU partitions).

Scalar partition (or CPU partition)

Without specifying a CPU partition, or with the cpu_p1 partition, you will have access to the following resources:

- 720 scalar compute nodes with:

  - 2 Intel Xeon Gold 6248 processors (20 cores at 2.5 GHz), i.e. 40 cores per node

  - 192 GB of memory per node

Note: Following the decommissioning of 808 CPU nodes on 5 February 2024, this partition went from 1528 nodes to 720 nodes.

Accelerated partitions (or GPU partitions)

Without indicating a GPU partition, or with the v100-16g or v100-32g constraint, you will have access to the following resources:

- 396 four-GPU accelerated compute nodes with:

  - 2 Intel Xeon Gold 6248 processors (20 cores at 2.5 GHz), i.e. 40 cores per node

  - 192 GB of memory per node

  - 126 nodes with 4 Nvidia Tesla V100 SXM2 16 GB GPUs (with v100-16g)

  - 270 nodes with 4 Nvidia Tesla V100 SXM2 32 GB GPUs (with v100-32g)

Note: Following the decommissioning of 220 4-GPU V100 16 GB nodes (v100-16g) on 5 February 2024, this partition went from 616 nodes to 396 nodes.

With the gpu_p2, gpu_p2s or gpu_p2l partitions, you will have access to the following resources:

- 31 eight-GPU accelerated compute nodes with:

  - 2 Intel Xeon Gold 6226 processors (12 cores at 2.7 GHz), i.e. 24 cores per node

  - 20 nodes with 384 GB of memory (with gpu_p2 or gpu_p2s)

  - 11 nodes with 768 GB of memory (with gpu_p2 or gpu_p2l)

  - 8 Nvidia Tesla V100 SXM2 32 GB GPUs

With the gpu_p5 partition, which is accessible only with A100 GPU hours (extension of June 2022), you will have access to the following resources:

- 52 eight-GPU accelerated compute nodes with:

  - 2 AMD Milan EPYC 7543 processors (32 cores at 2.80 GHz), i.e. 64 cores per node

  - 512 GB of memory per node

  - 8 Nvidia A100 SXM4 80 GB GPUs

With the gpu_p6 partition, which is accessible only with H100 GPU hours (extension of summer 2024), you will have access to the following resources:

- 364 four-GPU accelerated compute nodes with:

  - 2 Intel Xeon Platinum 8468 processors (48 cores at 2.10 GHz), i.e. 96 cores per node

  - 512 GB of memory per node

  - 4 Nvidia H100 SXM5 80 GB GPUs
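As a rough illustration of how the partitions and constraints listed above are selected, here is a minimal sketch of a Slurm batch script header. The `--constraint`, `--partition`, `--nodes`, `--ntasks-per-node`, `--gres`, `--cpus-per-task` and `--time` options are standard Slurm options; the job name, the resource counts, the time limit and the program name `./my_program` are hypothetical, and any accounting option your allocation may require is left out.

```shell
#!/bin/bash
#SBATCH --job-name=gpu_example     # hypothetical job name
#SBATCH --constraint=v100-32g      # four-GPU V100 32 GB nodes of the default GPU partition
#SBATCH --nodes=1
#SBATCH --ntasks-per-node=4        # one task per GPU (a choice made for this sketch)
#SBATCH --gres=gpu:4               # the 4 GPUs of the node
#SBATCH --cpus-per-task=10         # 40 cores per node shared between 4 tasks
#SBATCH --time=01:00:00            # hypothetical time limit

# To target the eight-GPU V100 nodes instead, the --constraint line would be
# replaced by a partition request such as:  --partition=gpu_p2
# (with the GPU and core counts adjusted to 8 GPUs and 24 cores per node).

srun ./my_program                  # hypothetical executable
```

The core count per task simply divides the cores of a node by its number of GPUs; other splits are possible depending on the application.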

Pre- and post-processing

With the prepost partition, you will have access to the following resources:

- 4 pre- and post-processing large memory nodes with:

  - 4 Intel Skylake 6132 processors (12 cores at 3.2 GHz), i.e. 48 cores per node

  - 3 TB of memory per node

  - 1 Nvidia Tesla V100 GPU

  - An internal NVMe 1.5 TB disk

Visualization

With the visu partition, you will have access to the following resources:

- 5 scalar-type visualization nodes with:

  - 2 Intel Cascade Lake 6248 processors (20 cores at 2.5 GHz), i.e. 40 cores per node

  - 192 GB of memory per node

  - 1 Nvidia Quadro P6000 GPU

Compilation

With the compil partition, you will have access to the following resources:

- 4 pre- and post-processing large memory nodes (see above)

- 3 compilation nodes with:

  - 1 Intel(R) Xeon(R) Silver 4114 processor (10 cores at 2.20 GHz)

  - 96 GB of memory per node

A compil_h100 partition is also available, providing access to a processor identical to those in the Eviden H100 partition (gpu_p6):

- 1 compilation node with:

  - 1 Intel(R) Xeon(R) Platinum 8468 processor (48 cores at 2.10 GHz)

  - 256 GB of memory per node

Archiving

With the archive partition, you will have access to the following resources:

- 4 pre- and post-processing nodes (see above)

Additional characteristics

- Cumulated peak performance of 125.9 PFlop/s (since July 2024)

- 100 Gb/s Intel Omni-Path interconnection network: 1 link per scalar node and 4 links per (four-GPU or eight-GPU) V100 converged node and (eight-GPU) A100 converged node

- 400 Gb/s NDR InfiniBand interconnection network: 4 links per (four-GPU) H100 converged node

- Lustre parallel file system

- Parallel storage device with a capacity of 2.5 PB SSD disks (GridScaler GS18K SSD) following the 2020 summer extension.

- Parallel storage device with disks having more than 30 PB capacity

- 5 front-end nodes with:

  - 2 Intel Cascade Lake 6248 processors (20 cores at 2.5 GHz), i.e. 40 cores per node

  - 192 GB of memory per node

3. Requesting allocations of hours on IDRIS machine

Requesting computing hours at IDRIS

Requesting resource hours on Jean Zay is done via the eDARI portal www.edari.fr, common to the three national computing centres: CINES, IDRIS and TGCC.

Before requesting any hours, we recommend that you consult the GENCI document (in French) detailing the conditions and eligibility criteria for obtaining computing hours.

Whatever the usage (AI or HPC), you may make a request at any time via a single form on the eDARI portal. Dynamic Access (AD) is for requests of up to 50 000 normalized GPU hours (1 A100 hour = 2 V100 hours = 2 normalized GPU hours) or 500 000 CPU hours. Regular Access (AR) is for requests larger than these values. Important: your request for resources is cumulative for the three national centres. Your file will be Dynamic Access or Regular Access, depending on the number of hours you request.
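The thresholds above can be turned into a small check. The following is only a sketch: the function name `classify_request` is hypothetical, the limits are treated as inclusive, and only the V100 and A100 conversion factors stated in the text are used (no factor is given here for H100 hours, so they are left out). The hours passed in are assumed to be already cumulated over the three national centres.

```shell
#!/usr/bin/env bash
# Thresholds taken from the text above (in hours).
GPU_LIMIT=50000     # normalized GPU hours
CPU_LIMIT=500000    # CPU hours

# classify_request V100_HOURS A100_HOURS CPU_HOURS
# Prints "dynamic" if the request fits within both thresholds, "regular" otherwise.
classify_request() {
    local v100=$1 a100=$2 cpu=$3
    # 1 V100 hour = 1 normalized GPU hour, 1 A100 hour = 2 normalized GPU hours
    local normalized=$(( v100 + 2 * a100 ))
    if (( normalized <= GPU_LIMIT && cpu <= CPU_LIMIT )); then
        echo "dynamic"
    else
        echo "regular"
    fi
}

# 10 000 V100 hours + 15 000 A100 hours = 40 000 normalized GPU hours
classify_request 10000 15000 0    # prints "dynamic"
```

The eDARI portal makes the actual determination; a check of this kind is only useful for estimating in advance which type of file a request will become.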

From your personal space (eDARI account) on the eDARI portal, you can:

- Create a Dynamic or Regular access file.

- Renew a Dynamic or Regular Access file.

- Request the opening of a compute account, necessary to access the computing resources on Jean Zay. Consult the IDRIS document on Account management.

An explanatory video about requesting hours and opening an account on Jean Zay is available on our YouTube channel "Un œil sur l'IDRIS" (it is in French, but subtitles in other languages are available).

Dynamic Access (AD)

Requests for resources for Dynamic Access files may be made throughout the year and are renewable. The requests receive an expert assessment and are validated by the IDRIS director. The hours allocation is valid for one year beginning at the opening of the computing account on Jean Zay.

Regular Access (AR)

Two project calls for Regular Access are launched each year:

- In January-February for an hours allocation from 1 May of the same year until 30 April of the following year.

- In June-July for an hours allocation from 1 November of the current year until 31 October of the following year.

New Regular access files can be submitted throughout the year. They will receive an expert assessment during the biannual project call campaign whose closing date most immediately follows validation of the file by the project manager.

Renewal requests for Regular Access files, however, can only be submitted during a campaign before its closure date and will receive an expert assessment at that time.

For information, the closing dates of the next calls are shown on the DARI web site (first column on the left).

Requests for supplementary hours ("au fil de l'eau")

Throughout the year, you may request additional resources (demandes au fil de l'eau) on the eDARI portal www.edari.fr for any existing project (Dynamic Access or HPC Regular Access) which has used up its initial hours quota during the year. The cumulated hours requested for a Dynamic Access file must remain below the thresholds of 50 000 normalized GPU hours or 500 000 CPU hours.

Documentation

Two documentation resources are available:

- IDRIS documentation to assist you in completing each of these formalities via the eDARI portal.

- The GENCI document (in French) detailing the procedures for access to the national resources.

4. How to obtain an account at IDRIS

Account management: Account opening and closure

User accounts

Each user has a unique account which can be associated with all the projects in which the user participates.

For more information, you may consult our web page regarding multi-project management.

Managing your account is done through completing an IDRIS form which must be sent to .

Of particular note, the FGC form is used to make modifications for an existing account: Add/delete machine access, change a postal address, telephone number, employer, etc.

An account can only exist as “open” or “closed”:

- Open. In this case, it is possible to:

  - Submit jobs on the compute machine if the project's current hours allocation has not been exhausted (cf. idracct command output).

  - Submit pre- and post-processing jobs.

- Closed. In this case, the user can no longer connect to the machine. An e-mail notification is sent to the project manager and the user at the time of account closure.

Opening a user account

For a new project

There is no automatic or implicit account opening. Each user of a project must request one of the following:

- If the user does not yet have an IDRIS account: the opening of an account, in accordance with the access procedures (GENCI note) for regular access as well as dynamic access. The request is made via the eDARI portal after the project concerned has obtained computing hours.

- If the user already has an account opened at IDRIS: the linking of this account to the project concerned, via the eDARI portal. This request must be signed by both the user and the manager of the added project.

IMPORTANT INFORMATION: By decision of the IDRIS director or the CNRS Defence and Security Officer (FSD), the creation of a new account may be submitted for ministerial authorisation in application of the French regulations for the Protection du Potentiel Scientifique et Technique de la Nation (PPST). In this event, a personal communication will be sent to the user so that the required procedure can be started; this authorisation procedure may take up to two months. To anticipate this, you can send an email directly to the centre concerned (see the contacts on the eDARI portal) to initiate the procedure in advance (for yourself or for someone you wish to integrate soon into your group), even before the account opening request is sent for validation to the director and the security manager of the research structure.

Comment: The opening of a new account on the machine will not be effective until (1) the access request (regular, dynamic, or preparatory) is validated (with ministerial authorization if requested) and (2) the concerned project has obtained computing hours.

You have access to a short video about resource allocation and account opening on Jean Zay on our YouTube channel "Un œil sur l'IDRIS" (it is in French, but the automatic subtitles work reasonably well).

For a project renewal

Existing accounts are automatically carried over from one project call to another if the eligibility conditions of the project members have not changed (cf. GENCI explanatory document for project calls Modalités d'accès). If your account is open and already associated with the project which has obtained hours for the following call, no action on your part is necessary.

Closure of a user account

Account closure of an unrenewed project

When a GENCI project is not renewed, the following procedure is applied:

- On the date of computing hours accounting expiry:

  - Unused DARI hours are no longer available and the project users can no longer submit jobs on the compute machine for this project (no more hours accounting for the project).

  - The project accounts remain open and linked to the project so that data can be accessed for a period of six months.

- Six months after the date of hours accounting expiry:

  - Project accounts are detached from the project (no more access to project data).

  - All the project data (SCRATCH, STORE, WORK, ALL_CCFRSCRATCH, ALL_CCFRSTORE and ALL_CCFRWORK) will be deleted at the initiative of IDRIS within an undefined time period.

  - All the project accounts which are still linked to another project will remain open but, if the non-renewed project was their default project, they must change it via the idrproj command (otherwise the variables SCRATCH, STORE, WORK, ALL_CCFRSCRATCH, ALL_CCFRSTORE and ALL_CCFRWORK will not be defined).

  - All the project accounts which are no longer linked to any project can be closed at any time.

File recovery is the responsibility of each user during the six months following the end of an unrenewed project, by transferring files to a user's local laboratory machine or to the Jean Zay disk spaces of another DARI project for multi-project accounts.

With this six-month delay period, we avoid premature closing of project accounts: This is the case for a project of allocation Ai which was not renewed for the following year (no computing hours requested for allocation Ai+2) but was renewed for allocation Ai+3 (which begins six months after allocation Ai+2).

Account closure after expiry of ministerial authorisation for accessing IDRIS computer resources

Ministerial authorisation is only valid for a certain period of time. When the ministerial authorisation reaches its expiry date, we are obligated to close your account.

In this situation, the procedure is as follows:

- A first email notification is sent to the user 90 days before the expiry date.

- A second email notification is sent to the user 70 days before the expiry date.

- The account is closed on the expiry date if the authorisation has not been renewed.

Important: to avoid this account closure, upon receipt of the first email, the user is invited to submit a new request for an account opening via the eDARI portal so that IDRIS can start examining an extension dossier. Processing the dossier can take up to two months.
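To make the timeline concrete, the sketch below computes the two notification dates from an expiry date. The function name `notification_dates` and the example date are hypothetical, and the script assumes GNU date (the `-d` option and the "N days ago" syntax are GNU extensions, so it will not work as written with BSD or macOS date).

```shell
#!/usr/bin/env bash
# notification_dates EXPIRY_DATE   (format YYYY-MM-DD)
# Prints the dates of the two reminder e-mails described above.
# Assumes GNU date; -u avoids any daylight-saving ambiguity.
notification_dates() {
    local expiry=$1
    echo "first notice:  $(date -u -d "$expiry 90 days ago" +%F)"
    echo "second notice: $(date -u -d "$expiry 70 days ago" +%F)"
    echo "expiry:        $expiry"
}

notification_dates 2025-12-31    # hypothetical expiry date
```

Since processing a dossier can take up to two months, acting on the first notice rather than the second leaves more margin before the expiry date.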

Account closure for security reasons

An account may be closed at any moment and without notice by decision of the IDRIS management.

Account closure following a detaching request made by the project manager

The project manager can request the detaching of an account attached to his/her project by completing and sending us the FGC form.

In this request, the project manager may ask that the data of the detached account contained in the SCRATCH, STORE, WORK, ALL_CCFRSCRATCH, ALL_CCFRSTORE and ALL_CCFRWORK directories be immediately deleted or copied into the directories of another user attached to the same project.

After the detachment, if the detached account is no longer attached to any project, it can be closed at any time.

Declaring the machines from which a user connects to IDRIS

Each machine from which a user wishes to access an IDRIS computer must be registered at IDRIS.

The user must provide, for each of his/her accounts, a list of machines which will be used to connect to the IDRIS computers (the machine's name and IP address). This is done at the creation of each account via the eDARI portal.

The user must update the list of machines associated with a login account (adding/deleting) by using the FGC form (account administration form). After completing this form, it must be signed by both the user and the security manager of the laboratory.

Important note: Personal IP addresses are not authorised for connection to IDRIS machines.

Security manager of the laboratory

The laboratory security manager is the network/security intermediary for IDRIS. This person must guarantee that the machine from which the user connects to IDRIS conforms to the most recent rules and practices concerning information security and must be able to immediately close the user access to IDRIS in case of a security alert.

The security manager's name and contact information are transmitted to IDRIS by the laboratory director on the FGC (account administration) form. This form is also used for informing IDRIS of any change in the security manager.

How to access IDRIS while teleworking or on mission

For security reasons, we cannot authorise access to IDRIS machines from non-institutional IP addresses. For example, you cannot have direct access from your personal connection.

Using a VPN

The recommended solution for accessing IDRIS resources when you are away from your registered address (teleworking, on mission, etc.) is to use the VPN (Virtual Private Network) of your laboratory/institute/university. A VPN allows you to access distant resources as if you were directly connected to the local network of your laboratory. Nevertheless, you still need to register the VPN-attributed IP address of your machine with IDRIS by following the procedure described above. This solution has the advantage of allowing the use of IDRIS services which are accessible via a web browser (for example, the extranet or products such as Jupyter Notebook, JupyterLab and TensorBoard).

Using a proxy machine

If using a VPN is impossible, it is always possible to connect via SSH to a proxy machine of your laboratory from which Jean Zay is accessible (which implies having registered the IP address of this proxy machine).

you@portable_computer:~$ ssh proxy_login@proxy_machine
proxy_login@proxy_machine:~$ ssh idris_login@idris_machine

Note that it is possible to automate the proxy via the SSH options ProxyJump or ProxyCommand to be able to connect by using only one command (for example, ssh -J proxy_login@proxy_machine idris_login@idris_machine).
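The ProxyJump option can also be stored in the SSH client configuration so that it does not have to be retyped. The following is a minimal sketch: `ProxyJump`, `HostName` and `User` are standard OpenSSH client options, the host alias `jean-zay` is a hypothetical name chosen for this example, and `proxy_login`, `proxy_machine` and `idris_login` are the same placeholders as in the text above.

```shell
# Append a host entry to the SSH client configuration.
# "jean-zay" is a hypothetical alias; replace the placeholder logins and proxy name.
cat >> ~/.ssh/config <<'EOF'
Host jean-zay
    HostName jean-zay.idris.fr
    User idris_login
    ProxyJump proxy_login@proxy_machine
EOF

# The connection through the proxy then takes a single command:
ssh jean-zay
```

The IP address of the proxy machine still has to be registered with IDRIS, exactly as for the two-step connection.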

Obtaining temporary access to IDRIS machines from a foreign country

The user on mission must request machine authorisation by completing the corresponding box on page 3 of the FGC form. Temporary ssh access to all the IDRIS machines is then granted.

5. How to connect to an IDRIS machine

How do I access Jean Zay?

Each IDRIS user holds a unique login for all the projects in which he/she participates. This account is personal and should not be shared. This login is associated with a password which is subject to certain security rules. Before connecting, we advise you to consult the page on password management and problems.

You can only connect to Jean Zay from a machine whose IP address is registered in our filters. If this is not the case, consult the procedure for declaring machines which is available on our Web site.

Interactive access to Jean Zay is possible on the front-end nodes of the machine via the ssh protocol or using our JupyterHub instance (warning: you will need to connect at least once using ssh to set your password).

For more detailed information, you may consult the description of the hardware and software of the cluster.

Jean Zay: Access and shells

Access to the machines

Jean Zay:

Connection to the Jean Zay front end is done by ssh from a machine registered at IDRIS:

$ ssh my_idris_login@jean-zay.idris.fr

followed by entering your password if you have not configured the ssh key.

Jean Zay pre- and post-processing:

Interactive connection to the pre-/post-processing front end is done by ssh from a machine registered at IDRIS:

$ ssh my_idris_login@jean-zay-pp.idris.fr

followed by entering your password if you have not configured the ssh key.

Connection by SSH key

Connections using a pair of SSH keys (private key / public key) are possible on Jean Zay.

ATTENTION: We plan to strengthen our security policy regarding access to the jean-zay machine. Therefore, we ask you to test, as of now, the usage of certificates for your SSH connections instead of the usual SSH key pairs (private key / public key) by following the procedures detailed here.

SSH connection with certificate

With the objective of reinforcing the security when accessing Jean Zay, we ask you to test the usage of certificates for your SSH connections instead of the usual SSH keys (private key/public key). The creation and usage of certificates are done by respecting the procedures detailed here.

During the test phase, connections via classic SSH keys will remain possible. Please let us know of any problems you may encounter with the usage of certificates.

Managing the environment

Your $HOME space is common to all the Jean-Zay front ends. Consequently, every modification of your personal environment files is automatically applied on all the machines.

What shells are available on the IDRIS machines?

The Bourne Again shell (bash) is the only command-line interpreter supported as login shell on the IDRIS machines: IDRIS does not guarantee that the default user environment will be correctly defined with other shells. Bash is a major evolution of the Bourne shell (sh) with advanced functionality. However, other command-line interpreters (ksh, tcsh, csh) are also installed on the machines to allow the execution of scripts using these shells.

Which environment files are invoked during the launching of a login session in bash?

The .bash_profile file, if it exists in your HOME, is executed once per session, at login. If not, the .profile file is executed, if it exists. One of these files is where environment variables and the programs to be launched at connection are placed. The definitions of aliases and personal functions, as well as module loading, are to be placed in the .bashrc file, which is run at the launch of each sub-shell.

It is preferable to use only one environment file: .bash_profile or .profile.
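As an illustration of this division of roles, here is a minimal sketch of the two files. The variable, alias and function names are hypothetical, and reading .bashrc from .bash_profile is a common bash convention rather than something stated above; no module names are shown because they depend on your own needs.

```shell
# ---- ~/.bash_profile : read once per session, at login ----
export MY_DATA_DIR="$HOME/data"          # hypothetical environment variable

# Common convention (an assumption here, not an IDRIS requirement):
# also read ~/.bashrc so that the login shell gets the same aliases and functions.
if [ -f "$HOME/.bashrc" ]; then
    . "$HOME/.bashrc"
fi

# ---- ~/.bashrc : read at the launch of each sub-shell ----
alias ll='ls -l'                         # hypothetical alias
mkcd() { mkdir -p "$1" && cd "$1"; }     # hypothetical personal function
# "module load" commands for the software you use would also go here.
```

Because $HOME is common to all the front ends, these two files apply everywhere without further copying.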

Passwords

Connecting to Jean Zay is done with the user login and the associated password.

During the first connection, the user must indicate the “initial password” and then immediately change it to an “actual password”.

The initial password

What is the initial password?

The initial password is the concatenation of two passwords, in the following order:

- The first part consists of a randomly generated IDRIS password which is sent to you by e-mail during the account opening and during a reinitialisation of your password. It remains valid for 20 days.

- The second part consists of the user-chosen password (8 characters) which you provided during your first account opening request using the eDARI portal (if you are a new user) or when requesting a change in your initial password (using the FGC form).

Note: For a user with a previously opened login account created in 2014 or before, the password indicated in the last postal letter from IDRIS should be used.

The initial password must be changed within 20 days following transmission of the randomly generated password (see below the section "Using an initial password at the first connection").

If this first connection is not done within the 20-day timeframe, the initial password is invalidated and an e-mail is sent to inform you. In this case, you just have to send an e-mail to

to request a new randomly generated password which is then sent to you by e-mail.

An initial password is generated (or re-generated) in the following cases:

- Account opening (or reopening): an initial password is formed at the creation of each account and also for the reopening of a closed account.

- Loss of the actual password:

- If you have lost your actual password, you must contact to request the re-generation of a randomly generated password which is then sent to you by e-mail. You will also need to have the user-chosen part of the password you previously provided in the FGC form.

- If you have also lost the user-chosen part of the password which you previously provided in the FGC form (or was contained in the postal letter from IDRIS in the former procedure of 2014 or before), you must complete the “Request to change the user part of initial password” section of the FGC form, print and sign it, then scan and e-mail it to or send it to IDRIS by postal mail. You will then receive an e-mail containing a new randomly generated password.

Using an initial password at the first connection

Below is an example of a first connection (without using ssh keys) for which the “initial password” is required for the login_idris account on an IDRIS machine.

Important: At the first connection, the initial password is requested twice: a first time to establish the connection to the machine and a second time by the password change procedure, which is then executed automatically.

Recommendation: As you have to change the initial password the first time you log in, carefully prepare another password before beginning the procedure (see Creation rules for "actual passwords" in the section below).

$ ssh login_idris@machine_idris.idris.fr
login_idris@machine_idris password:  ## Enter INITIAL PASSWORD first time ##
Last login: Fri Nov 28 10:20:22 2014 from machine_idris.idris.fr
WARNING: Your password has expired.
You must change your password now and login again!
Changing password for user login_idris.
Enter login( ) password:  ## Enter INITIAL PASSWORD second time ##
Enter new password:  ## Enter new chosen password ##
Retype new password:  ## Confirm new chosen password ##
password information changed for login_idris
passwd: all authentication tokens updated successfully.
Connection to machine_idris closed.

Remark: You will be disconnected immediately after entering a valid new password (“all authentication tokens updated successfully”).

Now, you may re-connect using your new actual password that you have just registered.

The actual password

Once your actual password has been created and entered correctly, it will remain valid for one year (365 days).

How to change your actual password

You can change your password at any time by using the UNIX command passwd directly on a front end. The change is taken into account immediately for all the machines. This new actual password will remain valid for one year (365 days) following its creation.

Creation rules for "actual passwords"

- It must contain a minimum of 12 characters.

- The characters must belong to at least 3 of the 4 following groups:

- Uppercase letters

- Lowercase letters

- Numbers

- Special characters

- The same character may not be repeated more than 2 times consecutively.

- A password must not be composed of words from dictionaries or from trivial combinations (1234, azerty, …).
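As an illustration only, the first three rules above can be pre-checked locally before starting the password change procedure. The sketch below is not an IDRIS tool: the function name check_password is hypothetical, "special characters" is assumed to mean any non-alphanumeric character, and the dictionary rule is not checked at all.

```shell
# Hypothetical local pre-check of a candidate password (not an IDRIS command).
# Assumption: a "special character" is any character that is not a letter or digit.
check_password() {
    pw=$1
    groups=0
    # Rule 1: at least 12 characters.
    if [ "${#pw}" -lt 12 ]; then
        echo "too short"
        return 1
    fi
    # Rule 2: characters from at least 3 of the 4 groups.
    case $pw in *[[:upper:]]*) groups=$((groups + 1)) ;; esac
    case $pw in *[[:lower:]]*) groups=$((groups + 1)) ;; esac
    case $pw in *[[:digit:]]*) groups=$((groups + 1)) ;; esac
    case $pw in *[![:alnum:]]*) groups=$((groups + 1)) ;; esac
    if [ "$groups" -lt 3 ]; then
        echo "too few character groups"
        return 1
    fi
    # Rule 3: the same character must not appear 3 or more times in a row.
    if printf '%s\n' "$pw" | grep -q '\(.\)\1\1'; then
        echo "character repeated 3 times or more"
        return 1
    fi
    # The dictionary / trivial-combination rule is NOT checked here.
    echo "ok"
}
```

Passing a real password as a command-line argument leaves it in the shell history; for real use, it would be safer to read it with a silent prompt and to run the check on your own workstation only.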

Notes:

- Your actual password is not modifiable on the same day as its creation or for the 5 days following its creation. Nevertheless, if necessary, you may contact the User Support Team to request a new randomly generated password for the re-creation of an initial password.

- A record is kept of the last 6 passwords used. Reusing one of the last 6 passwords will be rejected.

Forgotten or expired password

If you have forgotten your password or, despite the warning e-mails sent to you, you have not changed your actual password before its expiry date (i.e. one year after its last creation), your password will be invalidated.

In this case, you must contact IDRIS to request the re-generation of the randomly generated password, which is then sent to you by e-mail.

Note: You will also need the user-chosen part of the initial password which you initially provided to be able to connect to the host after this re-generation. In fact, you will have to follow the same procedure as for using an initial password at the first connection.

Account blockage following 15 unsuccessful connection attempts

If your account has been blocked as a result of 15 unsuccessful connection attempts, you must contact the IDRIS User Support Team.

Account security reminder

You must never write out your password in an e-mail, even ones sent to IDRIS (User Support, Gestutil, etc.) no matter what the reason: We would be obligated to immediately generate a new initial password, the objective being to inhibit the actual password which you published and to ensure that you define a new one during your next connection.

Each account is strictly personal. Discovery of account access by an unauthorised person will cause IDRIS to take immediate protective measures, which may include blocking the account.

The user must take certain basic common sense precautions:

- Inform IDRIS immediately of any attempted trespassing on your account.

- Respect the recommendations for using SSH keys.

- Protect your files by limiting UNIX access rights.

- Do not use a password which is too simple.

- Protect your personal work station.
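The precaution about limiting UNIX access rights can be illustrated with standard chmod options. This is a minimal sketch: the function name restrict_to_owner is hypothetical, and whether group access should be removed depends on how you share data within your project.

```shell
# Hypothetical helper: remove all group and other permissions, recursively,
# so that only the owner can read, write or traverse the given directory.
restrict_to_owner() {
    chmod -R go-rwx -- "$1"
}

# Typical use (assumption: you want the directory to be private):
#   restrict_to_owner "$HOME/private_data"
# New files can be made private by default with:
#   umask 077
```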

6. Management of your account and your environment variables

How do I modify my personal data?

Modification of your personal data is done via the Web interface Extranet.

- For those who do not have a password for Extranet or who have lost it, the access modalities are described on this page.

- For those who have a password, click on Extranet, connect with your identifiers, then ⇒ Your account ⇒ Your data ⇒ Your contact details.

The only data modifiable on line are:

- e-mail address

- telephone number

- fax number

Modification of your postal address is done by completing the section « Modification of the user's postal address » on the Administration Form for Login Accounts (FGC), and sending it to IDRIS from an institutional address. Note that this procedure requires the signatures of both the user and the laboratory director.

What disk spaces are available on Jean Zay ?

For each project, there are 5 distinct disk spaces available on Jean Zay: HOME, WORK, SCRATCH/JOBSCRATCH, STORE and DSDIR.

You will find the explanations concerning these spaces on the disk spaces page of our Web site.

Important: HOME, WORK and STORE are subject to quotas !

If your login is attached to more than one project, the IDRIS command idrenv will display all the environment variables referencing all the disk spaces of your projects. These variables allow you to access the data of your different projects from any of your other projects.

Choose your storage space according to your needs (permanent, semi-permanent or temporary data, large or small files, etc.).

How do I request an extension of a disk space or inodes?

If your use of the disk space is in accordance with its usage recommendations and if you cannot delete or move the data contents from this space, your project manager can make a justified request for a quota increase (space and/or inodes) via the extranet.

How can I know the number of computing hours consumed per project?

You simply need to use the IDRIS command idracct to know the hours consumed by each collaborator of the project as well as the total number of hours consumed and the percentage of the allocation.

Note that the information returned by this command is updated once per day (the date and time of the update are indicated in the first line of the command output).

If you have more than one project at IDRIS, this command will display the CPU and/or GPU consumptions of all the projects that your login is attached to.

What should I do when my computing hours are about to run out?

There are two possible ways to request supplementary hours:

- Either by requesting more resources as needed («au fil de l'eau»); this request may be made as soon as your AD or AR project has an hours allocation in progress.

- Or by requesting a complement of hours for a period of six months; this can be requested midway through your Regular Access allocation.

These requests must be justified and should be made via the eDARI portal as indicated on our Web page requesting resource hours.

How can I know when the machine is unavailable?

The machine can be unavailable because of a planned maintenance event or due to a technical problem which occurred unexpectedly. In both cases, the information is available on the homepage of the IDRIS Web site via the drop-down menu entitled, « For users », then the heading "Machine availability".

IDRIS users may also subscribe to the “info-machines” mailing list through the Extranet.

How do I recover files which I unintentionally deleted?

Due to the migration to new Lustre parallel file systems, there is no backup of the WORK space anymore. We recommend that you keep a backup of your important files as archive files stored in your STORE disk space.

Because their sizes are too large, neither the SCRATCH (semi-permanent space), nor the STORE (archiving space) are backed up.

Can I ask IDRIS to transfer files from one account to another account?

IDRIS considers data to be linked to a project. Consequently, for the transfer to be possible, the following are necessary:

- Both accounts (the owner and the recipient) must be attached to the same project.

- The project manager makes the request by signed fax or by e-mail to the support team, specifying:

- The concerned machine.

- The source account and the recipient account.

- The list of files and/or directories to transfer.

Can I recover files on an external storage medium?

It is no longer possible to request the transfer of files to an external storage medium.

7. The disk spaces

Jean Zay: The disk spaces

For each project, four distinct disk spaces are accessible: HOME, WORK, SCRATCH/JOBSCRATCH and the STORE.

Each space has specific characteristics adapted to its usage which are described below. The paths to access these spaces are stored in five variables of the shell environment: $HOME, $WORK, $SCRATCH, $JOBSCRATCH and $STORE.
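To see which of these five variables are defined in the current session, a small loop is enough. This is only a sketch: the function name show_spaces is hypothetical, and $JOBSCRATCH is expected to be unset outside of a batch job.

```shell
# Hypothetical helper: print the path behind each disk space variable,
# or report that the variable is not set in the current shell.
show_spaces() {
    for name in HOME WORK SCRATCH JOBSCRATCH STORE; do
        # Indirect lookup of the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -n "$value" ]; then
            printf '%s=%s\n' "$name" "$value"
        else
            printf '%s is not set\n' "$name"
        fi
    done
}
```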

You can check the occupation of the different disk spaces by using the IDRIS idr_quota_user and idr_quota_project commands or the Unix du (disk usage) command. The idr_quota_user and idr_quota_project commands return immediately but do not give real-time information (the data are updated once a day). The du command returns information in real time but its execution can take a long time, depending on the size of the directory concerned.

For database management specific to Jean Zay, a dedicated page was written as a complement to this one: Database management.

The HOME

$HOME : This is the home directory during an interactive connection. This space is intended for small-sized files which are frequently used such as the shell environment files, tools, and potentially the sources and libraries if they have a reasonable size. The size of this space is limited (in space and in number of files).

The HOME characteristics are:

- A permanent space.

- Intended to receive small-sized files.

- For a multi-project login, the HOME is unique.

- Subject to quotas per user which are intentionally rather low (3 GiB by default).

- Accessible interactively or in a batch job via the $HOME variable:

$ cd $HOME

- It is the home directory during an interactive connection.

Note: The HOME space is also referenced via the CCFRHOME environment variable to respect a common nomenclature with the other national computing centers (CINES, TGCC).

$ cd $CCFRHOME

The WORK

$WORK: This is a permanent work and storage space which is usable in batch. In this space, we generally store large-sized files for use during batch executions: very large source files, libraries, data files, executable files, result files and submission scripts.

The characteristics of WORK are:

- A permanent space.

- Intended to receive large-sized files: maximum size is 10 TiB per file.

- In the case of a multi-project login, a WORK is created for each project.

- Subject to quotas per project.

- Accessible interactively or in a batch job.

- It is composed of 2 sections:

- A section in which each user has their own part, accessed by the command:

$ cd $WORK

- A section common to the project to which the user belongs and into which files can be placed to be shared, accessed by the command:

$ cd $ALL_CCFRWORK

- The WORK is a disk space with a bandwidth of about 100 GB/s in read and in write. This bandwidth can be temporarily saturated if there is exceptionally intensive usage.

Note: The WORK space is also referenced via the CCFRWORK environment variable in order to respect a common nomenclature with other national computing centers (CINES, TGCC):

$ cd $CCFRWORK

Usage recommendations

- Batch jobs can run in the WORK. However, because several of your jobs can be run at the same time, you must manage the unique identities of your execution directories or your file names.

- In addition, this disk space is subject to quotas (per project) which can stop your execution suddenly if the quotas are reached. Therefore, you must be aware not only of your own activity in the WORK but also of that of your project colleagues. For these reasons, you may prefer using the SCRATCH or the JOBSCRATCH for the execution of batch jobs.
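One possible way to give each job its own execution directory, as recommended above, is to combine the Slurm job number with mktemp. This is a sketch under assumptions: the function name make_run_dir and the run_ prefix are hypothetical, and SLURM_JOB_ID is the variable that Slurm defines inside a batch job (the process ID is used as a fallback elsewhere).

```shell
# Hypothetical helper: create a uniquely named execution directory
# under a base directory (default: $WORK, or the current directory).
make_run_dir() {
    run_base=${1:-${WORK:-.}}
    # mktemp replaces XXXXXX with random characters, so two jobs
    # started at the same time can never share a directory.
    run_dir=$(mktemp -d "$run_base/run_${SLURM_JOB_ID:-$$}_XXXXXX") || return 1
    printf '%s\n' "$run_dir"
}

# Typical use inside a job script:
#   cd "$(make_run_dir "$WORK")" || exit 1
```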

The SCRATCH/JOBSCRATCH

$SCRATCH: This is a semi-permanent work and storage space which is usable in batch; the lifespan of the files is limited to 30 days. The large-sized files used during batch executions are generally stored here: the data files, result files or the computation restarts. Once the post-processing has been done to reduce the data volume, you must remember to copy the significant files into the WORK so that they are not lost after 30 days, or into the STORE for long-term archiving.

The characteristics of the SCRATCH are:

- The SCRATCH is a semi-permanent space with a 30-day file lifespan.

- Not backed up.

- Intended to receive large-sized files: maximum size is 10 TiB per file.

- Subject to very large security quotas:

- Disk quotas per project of about 1/10th of the total disk space for each group

- Inode quotas per project on the order of 150 million files and directories.

- Accessible interactively or in a batch job.

- Composed of 2 sections:

- A section in which each user has their own part, accessed by the command:

$ cd $SCRATCH

- A section common to the project to which the user belongs into which files can be placed to be shared. It is accessed by the command:

$ cd $ALL_CCFRSCRATCH

- In the case of a multi-project login, a SCRATCH is created for each project.

- The SCRATCH is a disk space with a bandwidth of about 500 GB/s in write and in read.

Note: The SCRATCH space is also referenced via the CCFRSCRATCH environment variable in order to respect a common nomenclature with other national computing centers (CINES, TGCC):

$ cd $CCFRSCRATCH
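Because files in the SCRATCH have a 30-day lifespan, it can be useful to list the files which are getting old before they disappear. The sketch below uses the standard find command and treats a file as old when it has been neither read nor modified for more than a given number of days; the function name list_expiring and the 25-day default are hypothetical, and the exact criterion applied by IDRIS for the purge may differ.

```shell
# Hypothetical helper: list regular files which have been neither accessed
# nor modified for more than N days (default 25) under a directory.
list_expiring() {
    scan_dir=$1
    max_age=${2:-25}
    find "$scan_dir" -type f -atime +"$max_age" -mtime +"$max_age" -print
}

# Typical use:
#   list_expiring "$SCRATCH" 25
```

The files reported can then be copied to the WORK or archived in the STORE.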

$JOBSCRATCH: This is the temporary execution directory specific to batch jobs.

Its characteristics are:

- A temporary directory with file lifespan equivalent to the batch job lifespan.

- Not backed up.

- Intended to receive large-sized files: maximum size is 10 TiB per file.

- Subject to very large security quotas:

- Disk quotas per project of about 1/10th of the total disk space for each group

- Inode quotas per project on the order of 150 million files and directories.

- Created automatically when a batch job starts and, therefore, is unique to each job.

- Destroyed automatically at the end of the job. Therefore, it is necessary to manually copy the important files onto another disk space (the WORK or the SCRATCH) before the end of the job.

- The JOBSCRATCH is a disk space with a bandwidth of about 500 GB/s in write and in read.

- During the execution of a batch job, the corresponding JOBSCRATCH is accessible from the Jean Zay front end via the job number JOBID (see the output of the squeue command) and your logname (environment variable LOGNAME), using the following command, in which JOBID is to be replaced by the job number:

$ cd /lustre/fsn1/jobscratch_hpe/${LOGNAME}_JOBID
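The path above is built from the logname, an underscore and the job number. A minimal sketch of this construction follows; the function name jobscratch_path is hypothetical. The braces around LOGNAME matter, since the shell would otherwise look for a variable named LOGNAME_ followed by the rest of the text.

```shell
# Hypothetical helper: build the JOBSCRATCH path of a running job
# from the current LOGNAME and a job number given as argument.
jobscratch_path() {
    printf '/lustre/fsn1/jobscratch_hpe/%s_%s\n' "${LOGNAME}" "$1"
}

# Typical use on the front end, with a job number taken from squeue:
#   cd "$(jobscratch_path 123456)"
```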

Usage recommendations:

- The JOBSCRATCH can be seen as the former TMPDIR.

- The SCRATCH can be seen as a semi-permanent WORK which offers the maximum input/output performance available at IDRIS but limited by a 30-day lifespan for files.

- The semi-permanent characteristics of the SCRATCH allow storing large volumes of data there between two or more jobs which run successively within a limited period of a few weeks: This disk space is not purged after each job.
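Since the JOBSCRATCH is destroyed at the end of the job, the important files have to be copied elsewhere before the job script finishes. The following sketch shows one way of doing this with standard commands; the function name stage_out and the file patterns are hypothetical.

```shell
# Hypothetical helper: copy the files matching the given patterns
# from a source directory to a destination directory.
# Usage: stage_out SRC_DIR DEST_DIR PATTERN...
stage_out() {
    src_dir=$1
    dest_dir=$2
    shift 2
    mkdir -p "$dest_dir" || return 1
    for pattern in "$@"; do
        # $pattern is left unquoted on purpose so that it is expanded
        # against the content of the source directory.
        for f in "$src_dir"/$pattern; do
            if [ -e "$f" ]; then
                cp -p "$f" "$dest_dir"/ || return 1
            fi
        done
    done
}

# Typical use as the last step of a job script (patterns must be quoted):
#   stage_out "$JOBSCRATCH" "$WORK/results_$SLURM_JOB_ID" '*.out' '*.nc'
```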

The STORE

$STORE: This is the IDRIS archiving space for long-term storage. Very large files are generally stored here after post-processing by regrouping the calculation result files in a tar file. This is a space which is not intended to be accessed or modified on a daily basis but to preserve very large volumes of data over time with only occasional consultation.

Important change: Since 22 July 2024, the STORE is only accessible from the login nodes and the prepost, archive, compil and visu partitions. Jobs running on the compute nodes cannot access this space directly, but you may use multi-step jobs to automate the data transfers to/from the STORE (see our examples of multi-step jobs using the STORE).
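As a rough idea of what such a chain can look like, the sketch below submits a compute job and then an archiving job which only starts if the first one succeeds, using the standard sbatch options --parsable, --partition and --dependency. It is not taken from the IDRIS examples: the function name submit_chain and the two script names are hypothetical, and only the partition name archive comes from the list above.

```shell
# Hypothetical helper: chain a compute job and an archiving job.
# Usage: submit_chain COMPUTE_SCRIPT ARCHIVE_SCRIPT
submit_chain() {
    compute_script=$1
    archive_script=$2
    # --parsable makes sbatch print only the job ID (possibly "ID;cluster").
    compute_id=$(sbatch --parsable "$compute_script") || return 1
    compute_id=${compute_id%%;*}
    # The second step runs on a partition which can access the STORE and
    # starts only after the compute step has ended successfully.
    sbatch --parsable --partition=archive \
        --dependency="afterok:$compute_id" "$archive_script"
}

# Typical use on a login node (script names are placeholders):
#   submit_chain compute.slurm archive.slurm
```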

Its characteristics are:

- The STORE is a permanent space.

- The STORE is not accessible from the compute nodes but only from the login nodes and the prepost, archive, compil and visu partitions (you may use multi-step jobs to automate the data transfers to/from the STORE; see our examples of multi-step jobs using the STORE).

- Intended to receive very large-sized files: The maximum size is 10 TiB per file and the minimum recommended size is 250 MiB (ratio of disk size to number of inodes).

- In the case of a multi-project login, a STORE is created per project.

- Subject to quotas per project with a small number of inodes, but a very large space.

- Composed of 2 sections:

- A section in which each user has their own part, accessed by the command:

$ cd $STORE

- A section common to the project to which the user belongs and into which files can be placed to be shared. It is accessed by the command:

$ cd $ALL_CCFRSTORE

Note: The STORE space is also referenced via the CCFRSTORE environment variable in order to respect a common nomenclature with other national computing centers (CINES, TGCC):

$ cd $CCFRSTORE

Usage recommendations:

- There is no longer a limitation on file lifespan.

- As this is an archive space, it is not intended for frequent access.
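Because the STORE has few inodes and favours large files, a results directory is usually grouped into a single tar file before being archived. A minimal sketch with the standard tar command follows; the function name archive_to_store is hypothetical, and it has to be run from a node which can access the STORE (not from a compute node).

```shell
# Hypothetical helper: pack a results directory into one tar file
# stored in the STORE (or in another directory given as 2nd argument).
archive_to_store() {
    results_dir=${1%/}
    store_dir=${2:-${STORE:-}}
    if [ -z "$store_dir" ]; then
        echo "no destination: STORE is not set" >&2
        return 1
    fi
    archive="$store_dir/$(basename "$results_dir").tar"
    # -C moves to the parent directory so the archive holds relative paths.
    tar -cf "$archive" -C "$(dirname "$results_dir")" \
        "$(basename "$results_dir")" || return 1
    # Reading the archive back is a cheap check before deleting the originals.
    tar -tf "$archive" > /dev/null || return 1
    printf '%s\n' "$archive"
}

# Typical use:
#   archive_to_store "$WORK/simulation_2024"
```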

The DSDIR

$DSDIR: This storage space is dedicated to large public databases (large in size or in number of files) which are needed for using AI tools. These databases are visible to all Jean Zay users.

If you use large public databases which are not found in the $DSDIR space, IDRIS will download and install them in this disk space at your request.

The list of currently available databases is found on this page: Jean Zay: Datasets and models available in the $DSDIR storage space.

If your database is personal or under a license which is too restrictive, you must take charge of its management yourself in the disk spaces of your project, as described on the Database Management page.

Summary table of the main disk spaces

| Space | Default capacity | Features | Usage |
|---|---|---|---|
| $HOME | 3 GB and 150k inodes per user | Home directory at connection | Storage of configuration files and small files |
| $WORK | 5 TB (*) and 500k inodes per project | Storage on rotating disks (350 GB/s in read and 300 GB/s in write) | Storage of sources and input/output data; execution in batch or interactive |
| $SCRATCH | Very large security quotas, 4.6 PB shared by all users | SSD storage (1.5 TB/s in read and 1.1 TB/s in write); lifespan of unused files: 30 days (unused = not read or modified); space not backed up | Storage of voluminous input/output data; execution in batch or interactive; optimal performance for read/write operations |
| $STORE | 50 TB (*) and 100k inodes (*) per project | Disk cache and magnetic tapes; long accesses if file only on tape | Long-term archive storage (for lifespan of project); no access from compute nodes |

The snapshots

WARNING: Due to the migration to new Lustre parallel file systems, there is no backup of the WORK space anymore. We recommend that you keep a backup of your important files as archive files stored in your STORE disk space.

Jean Zay: Disk quotas and commands for visualizing occupation rates

Introduction

Quotas guarantee equitable access to disk resources. They prevent one group of users from consuming all the space and thereby hindering other groups from working. At IDRIS, quotas limit both the quantity of disk space and the number of files (inodes). These limits are applied per user for the HOME (one HOME per user even if your login is attached to more than one project) and per project for the WORK and the STORE (as many WORK and STORE spaces as there are projects for the same user).

You can consult the occupation of your disk spaces by using the two commands presented on this page:

- idr_quota_user to view your personal usage as a user

- idr_quota_project to view your whole project and the consumption of each of the members

The idrquota command is no longer available. It was the first quota visualisation command which was deployed on Jean Zay. The current idr_quota_user and idr_quota_project commands are an evolution of this.

Exceeding the quotas

When a user or project has exceeded a quota, no warning e-mail is sent. Nevertheless, you are informed by error messages such as « Disk quota exceeded » when you manipulate files in the concerned disk space.

When one of the quotas is attained, you can no longer create files in the concerned disk space. Doing this could disturb jobs being executed at that time if they were launched from this space.

Warning: Editing a file after you have reached your disk quota limit can cause the file size to return to zero, thereby deleting its contents.

When you are blocked or in the process of being blocked:

- Try cleaning out the concerned disk space by deleting the files which have become obsolete.

- Archive the directories which you no longer, or rarely, access.

- Move your files into another space according to their usage (see the page on disk spaces).

- The project manager or deputy may request a quota increase for the STORE space via the Extranet interface.

Comments:

- You need to remember to check the common disk spaces, $ALL_CCFRWORK and $ALL_CCFRSTORE.

- A recurrent cause of exceeding quotas is the use of personal conda environments. Please refer to the Python personal environment page to understand the best practices on Jean Zay.

The idr_quota_user command

By default, the idr_quota_user command returns your personal user occupation for all of the disk spaces of your active project. For example, if your active project is abc, you will see an output similar to the following:

$ idr_quota_user
HOME
INODE: |██-------------------------------| U: 9419/150000 6.28%
STORAGE: |████████████████████████████████-| U: 2.98 GiB/3.00 GiB 99.31%
ABC STORE
INODE: |---------------------------------| U: 1/100000 0.00% G: 12/100000 0.01%
STORAGE: |---------------------------------| U: 4.00 KiB/50.00 TiB 0.00% G: 48.00 KiB/50.00 TiB 0.00%
ABC WORK
INODE: |███▒▒▒---------------------------| U: 50000/500000 10.00% G: 100000/500000 20.00%
STORAGE: |██████████▒▒▒▒▒▒▒▒▒▒-------------| U: 1.25 TiB/5.00 TiB 25.00% G: 2.5 TiB/5.00 TiB 50.00%
The quotas are refreshed daily. All the information is not in real time and may not reflect your real storage occupation.

In this output example, your personal occupation is represented by the black bar and quantified on the right after the letter U (for User).

Your personal occupation is also compared to the global occupation of the project which is represented by the grey bar (in this output example) and quantified after the letter G (for Group).

Note that the colours can be different depending on the parameters and/or type of your terminal.

You can refine the information returned by the idr_quota_user command by adding one or more of the following arguments:

- --project def to display the occupation of a different project than your active project (def in this example)

- --all-projects to display the occupation of all the projects to which you are attached

- --space home work to display the occupation of one or more disk spaces in particular (the HOME and the WORK in this example)

Complete help for the idr_quota_user command is accessible by launching:

$ idr_quota_user -h
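If you want to be warned before a quota is reached, the output format shown above can be filtered with awk. This is only a sketch: the function name quota_warnings is hypothetical, and it assumes that the output keeps the layout of the example (a line naming the space, followed by INODE: and STORAGE: lines ending with percentages) and contains no colour escape codes when piped.

```shell
# Hypothetical filter: read idr_quota_user-style output on standard input
# and print every usage percentage at or above a limit (default 90).
quota_warnings() {
    awk -v limit="${1:-90}" '
        NF == 0 { next }
        # Any line which is not a counter line names the current space.
        $1 != "INODE:" && $1 != "STORAGE:" { space = $0; next }
        {
            for (i = 2; i <= NF; i++)
                if ($i ~ /%$/ && $i + 0 >= limit)
                    printf "%s %s %s\n", space, $1, $i
        }'
}

# Typical use:
#   idr_quota_user | quota_warnings 90
```

With the example output above, only the HOME storage line (99.31%) would be reported.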

The idr_quota_project command

By default, the idr_quota_project command returns the disk occupation of each member of your active project for all of the disk spaces associated with the project. For example, if your active project is abc, you will see an output similar to the following:

$ idr_quota_project
PROJECT: abc SPACE: WORK
PROJECT USED INODE: 34373/500000 6.87%
PROJECT USED STORAGE: 1.42 GiB/5.00 TiB 0.03%
┌─────────────────┬─────────────────┬─────────────────┬─────────────────┬──────────────────────┐
│ LOGIN           │ INODE ▽         │ INODE %         │ STORAGE         │ STORAGE %            │
├─────────────────┼─────────────────┼─────────────────┼─────────────────┼──────────────────────┤
│ abc001          │            29852│            5.97%│       698.45 MiB│                 0.01%│
│ abc002          │             4508│            0.90%│       747.03 MiB│                 0.01%│
│ abc003          │                8│            0.00%│         6.19 MiB│                 0.00%│
│ abc004          │                1│            0.00%│           0.00 B│                 0.00%│
│ abc005          │                1│            0.00%│           0.00 B│                 0.00%│
└─────────────────┴─────────────────┴─────────────────┴─────────────────┴──────────────────────┘
PROJECT: abc SPACE: STORE
PROJECT USED INODE: 13/100000 0.01%
PROJECT USED STORAGE: 52.00 KiB/50.00 TiB 0.00%
┌─────────────────┬─────────────────┬─────────────────┬─────────────────┬──────────────────────┐
│ LOGIN           │ INODE ▽         │ INODE %         │ STORAGE         │ STORAGE %            │
├─────────────────┼─────────────────┼─────────────────┼─────────────────┼──────────────────────┤
│ abc001          │                2│            0.00%│         8.00 KiB│                 0.00%│
│ abc002          │                2│            0.00%│         8.00 KiB│                 0.00%│
│ abc003          │                2│            0.00%│         8.00 KiB│                 0.00%│
│ abc004          │                2│            0.00%│         8.00 KiB│                 0.00%│
│ abc005          │                1│            0.00%│         4.00 KiB│                 0.00%│
└─────────────────┴─────────────────┴─────────────────┴─────────────────┴──────────────────────┘
The quotas are refreshed daily. All the information is not in real time and may not reflect your real storage occupation.

A summary of the global occupation is displayed for each disk space, followed by a table with the occupation details of each member of the project.

You can refine the information returned by the idr_quota_project command by adding one or more of the following arguments:

- --project def to display the occupation of a different project than your active project (def in this example)

- --space work to display the occupation of one (or more) particular disk space(s) (the WORK in this example)

- --order storage to display the values of a given column in decreasing order (the STORAGE column in this example)

Complete help for the idr_quota_project command is accessible by launching:

$ idr_quota_project -h

General comments

- The projects to which you are attached correspond to the UNIX groups listed by the idrproj command.

- The quotas are not monitored in real time and may not represent the actual occupation of your disk spaces. The data returned by the idr_quota_user and idr_quota_project commands are updated each morning.

- To know, in real time, the volume in bytes and the number of inodes of a given directory (my_directory), you can execute the commands du -hd0 my_directory and du -hd0 --inodes my_directory, respectively. Contrary to the “idr_quota” commands, the du commands can take a long time to execute, depending on the directory size.

- For the WORK and the STORE, the displayed occupation rates include both the personal space ($WORK or $STORE) and the occupation of the common space ($ALL_CCFRWORK or $ALL_CCFRSTORE).
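When an inode quota is nearly reached, it helps to find out which sub-directories hold the most files. The sketch below builds on the du --inodes option mentioned above (a GNU du option) with a depth of 1; the function name top_inode_dirs is hypothetical. Like any du command, it can take a long time on large directories.

```shell
# Hypothetical helper: show the sub-directories of a directory which
# contain the most inodes, largest first (10 lines by default).
# The first line is the total for the directory itself.
top_inode_dirs() {
    du --inodes -d1 -- "${1:-.}" 2>/dev/null | sort -rn | head -n "${2:-10}"
}

# Typical use:
#   top_inode_dirs "$WORK" 5
```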

8. Commands for file transfers

File transfers using the bbftp command

To transfer large-sized files from IDRIS to your laboratory, we advise you to use BBFTP, a software tool optimised for transferring files.

All the information for using the bbftp command is found on our website.

File transfers via CCFR network

How do I transfer data between the 3 national centres (CCFR network) ?

Introduction

The CCFR (Centres de Calcul Français) network is dedicated to very high speed and interconnects the three French national computing centres: CINES, IDRIS and TGCC. This network is made available to users to facilitate data transfers between the national centres. The machines currently connected on this network are Joliot-Curie at TGCC, Jean Zay at IDRIS, and Adastra at CINES.

Using this network requires that you have logins (different for each center) in at least two of the three centres and that they are authorized to access the CCFR network in the concerned centres.

Comments:

- For your IDRIS login, the request for access to the CCFR network can be made:

- when you request to create an account from eDARI portal,

- or by sending IDRIS an e-mail, from the address which IDRIS has on record for you, with the title « CCFR: IDRIS login / your name ». This information will be transmitted to the two other centers so that they can make your access operational.

- Moreover, not all of the Jean Zay nodes are connected to this network. To use it from IDRIS, you can use the front-end nodes jean-zay.idris.fr and jean-zay-pp.idris.fr.

For more information, please contact the User Support Team.

Data transfers via CCFR network

Data transfers between the machines of the centres via the CCFR network constitute the principal service of this network. A command wrapper ccfr_cp accessible via a modulefile is provided to simplify the usages:

$ module load ccfr

This ccfr_cp command automatically retrieves the connection information of the specified machine (domain name, port number) and detects the authentication possibilities. By default, the command will opt for basic authentication, using the traditional methods in force on the targeted machine.

The ccfr_cp command is based on the rsync tool and configured to use the SSH protocol for transfers. The copy is recursive and keeps the symbolic links, the access rights and the dates of file modifications.

The command details and the list of the machines accessible on the CCFR network are available by specifying the -h option to the ccfr_cp command.

For transfers from jean-zay to CINES and TGCC machines, you can use commands similar to these:

$ module load ccfr
$ ccfr_cp /path/to/datas/on/jean-zay login_cines@adastra:/path/to/directory/on/adastra:
$ ccfr_cp /path/to/datas/on/jean-zay login_tgcc@irene:/path/to/directory/on/irene:

For transfers from Adastra, the procedure is similar except that you must use the machine adastra-ccfr.cines.fr (accessible from adastra.cines.fr) as shown on CINES documentation.

For transfers from Joliot-Curie, the procedure is also similar and can be carried out directly from the front-end nodes irene-fr.ccc.cea.fr. After connecting to the machine, the machine.info command will give you all the useful information.

A ccfr_sync command, variant of ccfr_cp, enables a strong synchronisation between the source and the destination by adding, compared to the ccfr_cp command, the deletion of the destination files which are no longer present in the source. The -h option is also available for this command.

Remark: These commands will use a basic authentication with password in compliance with the terms and conditions in force at the remote centre (CINES or TGCC). You will therefore certainly be required to provide a password each time. To avoid this, you can use IDRIS transfer-only certificates (valid for 7 days) whose instructions for use are defined on the IDRIS website. Using such certificates will force you to initiate transfers from the remote machine adastra-ccfr.cines.fr (accessible from adastra.cines.fr) for CINES and irene-fr.ccc.cea.fr for TGCC after having copied the transfer-only certificate on the remote machine and to build the rsync transfer commands yourself (so do not use the ccfr_cp and ccfr_sync wrappers). You can then draw inspiration from the following examples to make your transfers:

# Simple copy from jean-zay to remote machine (initiated on remote machine)
# using transfer-only certificate registered in ~/.ssh/id_ecc_rsync on remote machine
$ rsync --human-readable --recursive --links --perms --times --omit-dir-times -v \
    -e 'ssh -i ~/.ssh/id_ecc_rsync' \
    login_idris@jean-zay-ccfr.idris.fr:/path/on/jean-zay /path/on/adastra/or/irene
# Strong synchronization (--delete option) from jean-zay to remote machine (initiated on remote machine)
# using transfer-only certificate registered in ~/.ssh/id_ecc_rsync on remote machine
$ rsync --human-readable --recursive --links --perms --times --omit-dir-times -v --delete \
    -e 'ssh -i ~/.ssh/id_ecc_rsync' \
    login_idris@jean-zay-ccfr.idris.fr:/path/on/jean-zay /path/on/adastra/or/irene

Attention : On adastra-ccfr.cines.fr, the id_ecc_rsync certificate must be visible from your directory /home/login_cines/.ssh so that the ssh command can use it (no environment variable is defined for this disk space). You must therefore take care to unarchive the certificate in this directory with a command like:

login_cines@adastra-ccfr.cines.fr:~$ unzip ~/transfert_certif.zip -d /home/login_cines/.ssh
Archive: /lus/home/.../transfert_certif.zip
  inflating: /home/login_cines/.ssh/id_ecc_rsync
  inflating: /home/login_cines/.ssh/id_ecc_rsync.pub

Data transfers via parallel-sftp

To speed up file transfers between the three national centers, you can also use the parallel-sftp command. It is a tool developed by CEA which runs several standard sftp connections in parallel to make parallel file transfers.

You can find more information on the CEA web site.

parallel-sftp is installed on the login nodes of the IDRIS jean-zay cluster. Its usage is identical to that of sftp, except for the -n option which lets you choose the number of ssh connections used for the parallel transfers.

For example, to make a parallel transfer with 5 ssh connections:

$ parallel-sftp -n 5 <remote_login>@<remote_host>

Thus, if a single sftp transfer is limited to 1 Gbps, for example, this parallel transfer can use up to 5 Gbps.

Warning: for your transfers between national centers, you must use the nodes connected to the CCFR network. For IDRIS, this means that the <remote_host> parameter above must be adastra-ccfr.cines.fr or irene-fr-ccfr.ccc.cea.fr. However, you can run the parallel-sftp command directly from jean-zay.idris.fr.

From IDRIS, you can follow the example below, written for adastra (for irene, simply use irene-fr-ccfr.ccc.cea.fr instead):

# 1/ Connect to jean-zay:
$ ssh jean-zay_login@jean-zay.idris.fr

# 2/ Initiate the connection which allows transfers with the adastra machine,
#    using up to 5 parallel connections in this example
$ parallel-sftp -n 5 adastra_login@adastra-ccfr.cines.fr
sftp>
# You are then in an sftp environment in which you can execute
# put and get commands to make the transfers (see man sftp).
# WARNING: environment variables such as WORK, SCRATCH, STORE, ...
#          are not defined and therefore cannot be used to
#          define the paths to the data to be transferred.
#          You must indicate the full paths!

# 3/ Make transfers
# To transfer a directory from jean-zay to adastra, you can do:
sftp> put -r /path/to/jean-zay/src/directory /path/to/adastra/dest/directory
# To transfer a directory from adastra to jean-zay, you can do
# (get takes the remote path first, then the local path):
sftp> get -r /path/to/adastra/src/directory /path/to/jean-zay/dest/directory
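Since WORK, SCRATCH and STORE are not defined inside the sftp session, one possible approach is to let your jean-zay shell expand them before you start parallel-sftp. The sketch below assumes a hypothetical helper named sftp_put_line and a hypothetical directory my_results; it prints a put line containing the full path, which can then be pasted at the sftp> prompt.

```shell
# Run this in your jean-zay shell, before starting parallel-sftp.
# The shell expands $WORK here, so the printed line contains the full path.
sftp_put_line() {
    local_dir=$1
    remote_dir=$2
    printf 'put -r %s %s\n' "$local_dir" "$remote_dir"
}

# my_results is a hypothetical directory name
sftp_put_line "$WORK/my_results" /path/to/adastra/dest/directory
```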

9. The module command

For more information, consult our web page about instructions to use the module command on Jean Zay.

10. Compilation

Jean Zay: The Fortran and C/C++ compilation system (Intel)

$ module avail intel-compilers
----------------------- /gpfslocalsup/pub/module-rh/modulefiles --------------------------
intel-compilers/16.0.4  intel-compilers/18.0.5  intel-compilers/19.0.2  intel-compilers/19.0.4

$ module load intel-compilers/19.0.4
$ module list
Currently Loaded Modulefiles:
  1) intel-compilers/19.0.4

$ ifort prog.f90 -o prog
$ icc prog.c -o prog
$ icpc prog.C -o prog

Jean Zay: Compilation of an MPI parallel code in Fortran, C/C++

- Intel MPI:

$ module avail intel-mpi
-------------------------------------------------------------------------- /gpfslocalsup/pub/module-rh/modulefiles --------------------------------------------------------------------------
intel-mpi/5.1.3(16.0.4)   intel-mpi/2018.5(18.0.5)  intel-mpi/2019.4(19.0.4)  intel-mpi/2019.6  intel-mpi/2019.8
intel-mpi/2018.1(18.0.1)  intel-mpi/2019.2(19.0.2)  intel-mpi/2019.5(19.0.5)  intel-mpi/2019.7  intel-mpi/2019.9

$ module load intel-compilers/19.0.4 intel-mpi/19.0.4

- Open MPI (without CUDA-aware MPI, you must choose one of the modules with no -cuda extension):

$ module avail openmpi
-------------------------------------------------------- /gpfslocalsup/pub/modules-idris-env4/modulefiles/linux-rhel8-skylake_avx512 --------------------------------------------------------
openmpi/3.1.4       openmpi/3.1.5  openmpi/3.1.6-cuda  openmpi/4.0.2       openmpi/4.0.4       openmpi/4.0.5       openmpi/4.1.0       openmpi/4.1.1
openmpi/3.1.4-cuda  openmpi/3.1.6  openmpi/4.0.1-cuda  openmpi/4.0.2-cuda  openmpi/4.0.4-cuda  openmpi/4.0.5-cuda  openmpi/4.1.0-cuda  openmpi/4.1.1-cuda

$ module load pgi/20.4 openmpi/4.0.4

- Intel MPI:

$ mpiifort source.f90
$ mpiicc source.c
$ mpiicpc source.C

- Open MPI:

$ mpifort source.f90
$ mpicc source.c
$ mpic++ source.C
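The mapping between MPI library, source language and compiler wrapper shown above can be summarised in a small helper, for instance inside a build script. This is only an illustrative sketch: the function name mpi_wrapper is hypothetical, and the file extensions other than .f90, .c and .C are assumptions about common naming conventions.

```shell
# Hypothetical helper: print the MPI compiler wrapper to use for a source file.
# $1 is the MPI library ("intel" or "openmpi"), $2 is the source file name.
mpi_wrapper() {
    flavor=$1
    source_file=$2
    # Shell patterns are case-sensitive, so *.c (C) and *.C (C++) are distinct
    case "$source_file" in
        *.f90|*.F90|*.f) lang=fortran ;;
        *.c)             lang=c ;;
        *.C|*.cpp|*.cxx) lang=cxx ;;
        *) echo "unknown source type: $source_file" >&2; return 1 ;;
    esac
    case "$flavor:$lang" in
        intel:fortran)   echo mpiifort ;;
        intel:c)         echo mpiicc ;;
        intel:cxx)       echo mpiicpc ;;
        openmpi:fortran) echo mpifort ;;
        openmpi:c)       echo mpicc ;;
        openmpi:cxx)     echo mpic++ ;;
        *) echo "unknown MPI library: $flavor" >&2; return 1 ;;
    esac
}

mpi_wrapper intel source.f90    # prints mpiifort
mpi_wrapper openmpi source.C    # prints mpic++
```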

Jean Zay: Compilation of an OpenMP parallel code in Fortran, C/C++

$ ifort -qopenmp source.f90
$ icc -qopenmp source.c
$ icpc -qopenmp source.C

$ ifort -c -qopenmp source1.f
$ ifort -c source2.f
$ icc -c source3.c
$ ifort -qopenmp source1.o source2.o source3.o

Jean Zay: Using the NVIDIA/PGI compilation system for C/C++ and Fortran

$ module avail pgi nvidia-compilers
--------------------- /gpfslocalsup/pub/module-rh/modulefiles ---------------------
pgi/19.10  pgi/20.1  pgi/20.4

--------------------- /gpfslocalsup/pub/module-rh/modulefiles ---------------------
nvidia-compilers/20.7   nvidia-compilers/21.7  nvidia-compilers/23.9
nvidia-compilers/20.9   nvidia-compilers/21.9  nvidia-compilers/23.11
nvidia-compilers/20.11  nvidia-compilers/22.5  nvidia-compilers/24.3
nvidia-compilers/21.3   nvidia-compilers/22.9
nvidia-compilers/21.5   nvidia-compilers/23.1

$ module load nvidia-compilers/24.3
$ module list
Currently Loaded Modulefiles:
  1) nvidia-compilers/24.3

$ nvc prog.c -o prog
$ nvc++ prog.cpp -o prog
$ nvfortran prog.f90 -o prog

Jean Zay: Compiling an OpenACC code

The PGI compiler options for activating OpenACC are the following:

-acc: This option activates the OpenACC support. You can specify some suboptions:

- [no]autopar: Activate automatic parallelization for the ACC PARALLEL directive. The default is to activate it.

- [no]routineseq: Compile all the routines for the accelerator. The default is to not compile each routine as a sequential directive.

- strict: Display warning messages if using non-OpenACC directives for the accelerator.

- verystrict: Stops the compilation if using any non-OpenACC directives for the accelerator.

- sync: Ignore async clauses.

- [no]wait: Wait for the completion of each calculation kernel on the accelerator. By default, kernel launching is blocked except if async is used.

- Example:

$ pgfortran -acc=noautopar,sync -o prog_ACC prog_ACC.f90

-ta: This option activates offloading on the accelerator. It automatically activates the -acc option.

- It will be useful for choosing the architecture for compiling the code.

- To use the V100 GPUs of Jean Zay, it is necessary to use the tesla suboption of -ta and the cc70 compute capability. For example:

$ pgfortran -ta=tesla:cc70 -o prog_gpu prog_gpu.f90

- Some useful tesla suboptions:

  - managed: Creates a shared view of the GPU and CPU memory.

  - pinned: Activates CPU memory pinning. This can improve data transfer performance.

  - autocompare: Activates comparison of CPU/GPU results.
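Several tesla suboptions are combined by separating them with commas after the compute capability. As a minimal sketch of how such an option string could be assembled in a build script (the helper name ta_option is hypothetical):

```shell
# Hypothetical helper: build a -ta option from a compute capability
# followed by any number of tesla suboptions.
ta_option() {
    option="-ta=tesla:$1"
    shift
    for sub in "$@"; do
        option="$option,$sub"
    done
    printf '%s\n' "$option"
}

ta_option cc70            # prints -ta=tesla:cc70
ta_option cc70 managed    # prints -ta=tesla:cc70,managed

# Possible use on Jean Zay (not run here):
#   pgfortran $(ta_option cc70 managed) -o prog_gpu prog_gpu.f90
```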

Jean Zay: CUDA-aware MPI and GPUDirect

For optimal performance, CUDA-aware Open MPI libraries supporting GPUDirect are available on Jean Zay.

$ module avail openmpi/*-cuda
-------------- /gpfslocalsup/pub/modules-idris-env4/modulefiles/linux-rhel8-skylake_avx512 --------------
openmpi/3.1.4-cuda  openmpi/3.1.6-cuda  openmpi/4.0.2-cuda  openmpi/4.0.4-cuda

$ module load openmpi/4.0.4-cuda

$ mpifort source.f90
$ mpicc source.c
$ mpic++ source.C

No particular option is necessary for the compilation. For more information on compiling code which uses the GPUs, you may refer to the GPU compilation section of the index.

Adaptation of the code

Using the CUDA-aware MPI GPUDirect functionality on Jean Zay requires a specific initialisation order for CUDA or OpenACC and MPI in the code:

- Initialisation of CUDA or OpenACC

- Choice of the GPU which each MPI process should use (binding step)

- Initialisation of MPI.

Caution: if this initialisation order is not respected, your code execution might crash with the following error:

CUDA failure: cuCtxGetDevice()

11. Code execution

Interactive and batch

There are two possible job modes: interactive and batch.

In both cases, you must respect the maximum limits in elapsed (or clock) time, memory, and number of processors and/or number of GPUs which are set by IDRIS with the goal of better managing the computing resources. You will find more complete information concerning these limits by consulting the following pages on our Web server: CPU Slurm partitions, GPU Slurm partitions and the pages detailing how to reserve memory for CPU jobs or for GPU jobs.

Interactive jobs

From the machines declared in the IDRIS filters, you have SSH access to the front ends from which you can:

- Manage your files (creating, copying, archiving, saving, compiling, …) and your disk quotas in the different disk spaces.

- Manage your account (change a password, manage a multi-project login).

- Monitor the hours consumption of your project(s).

- Submit and monitor your calculations on the compute nodes via the Slurm software.

- Connect in SSH to compute nodes which are assigned to one of your jobs in order to monitor the execution of your calculations.

- Execute your codes on the compute nodes via the srun command or the salloc command (allocation/reservation of resources) or, alternatively, via an interactive shell executed on a compute node. For more information, consult our documentation concerning interactive execution of CPU codes and interactive execution of GPU codes.

Note: Code requiring GPUs cannot be executed on the front ends, as they are not equipped with GPUs.

Batch jobs

There are several reasons to work in batch mode:

- Having the possibility of closing the interactive session after submitting a batch job.

- Having the possibility of going beyond the limits of interactive mode in elapsed (or clock) time, memory, number of processors or GPUs.

- Doing the computations with dedicated resources (these resources are reserved for you alone).

- Allowing for better resource management for users by distribution on the machine according to the resources requested.

- Launching your pre-/post-processing jobs on nodes dedicated to large memory (jean-zay-pp).

At IDRIS, we use Slurm software for batch job management on the compute nodes, the pre-/post-processing nodes (jean-zay-pp) and the visualisation nodes (jean-zay-visu).

This batch manager controls the scheduling of jobs according to resources requested (memory, elapsed (or clock) time, number of CPUs, number of GPUs, …), the number of active jobs at a given moment (number in total and number per user) and the number of hours consumed per project.

There are 2 essential steps in order to work in batch: job creation and job submission.

Job creation

This step consists of writing all the commands that you want executed into a file and then adding, at the beginning of the file, the Slurm submission directives for defining certain parameters such as:

- Job name (directive #SBATCH --job-name=...)

- Elapsed time limit for the entire job (directive #SBATCH --time=HH:MM:SS)

- Number of compute nodes (directive #SBATCH --nodes=...)

- Number of (MPI) processes per compute node (directive #SBATCH --ntasks-per-node=...)

- Total number of (MPI) processes (directive #SBATCH --ntasks=...)

- Number of OpenMP threads per process (directive #SBATCH --cpus-per-task=...)

- Number of GPUs for jobs using GPUs (directive #SBATCH --gres=gpu:...)

Once the submission directives have been defined, it is recommended to enter the commands in the following order:

- Go into the execution directory under WORK, SCRATCH or JOBSCRATCH (for more information, see our documentation about the disk spaces).

- Copy the entry files necessary to the execution into this directory.

- Launch the execution (via the srun command for the MPI, hybrid or multi-GPU codes).

- If you have used the SCRATCH or the JOBSCRATCH, you should copy the result files which you wish to save.
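The directives and the recommended command order can be put together as in the following sketch of a hybrid MPI/OpenMP job. It writes a script named mon_job; the job name, resource values, directory names (my_run, my_case), file names and the executable my_prog are all hypothetical and must be adapted to your case. SLURM_CPUS_PER_TASK is the environment variable which Slurm sets from the --cpus-per-task directive.

```shell
# Write a minimal batch script to the file mon_job.
# The quoted 'EOF' keeps $WORK and $SCRATCH unexpanded until the job runs.
cat > mon_job <<'EOF'
#!/bin/bash
#SBATCH --job-name=my_job          # job name (hypothetical)
#SBATCH --time=00:10:00            # elapsed time limit (HH:MM:SS)
#SBATCH --nodes=1                  # number of compute nodes
#SBATCH --ntasks-per-node=4        # (MPI) processes per compute node
#SBATCH --cpus-per-task=10         # OpenMP threads per process

# 1. Go into the execution directory (assumed to exist already)
cd "$SCRATCH/my_run"

# 2. Copy the input files needed for the execution
cp "$WORK/my_case/input.dat" .

# 3. Launch the execution
export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK
srun ./my_prog

# 4. Copy the result files to keep out of the SCRATCH
cp output.dat "$WORK/my_case/"
EOF
```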

Comments:

- With the Slurm directive #SBATCH --cpus-per-task=..., which sets the number of threads per process, you may also set the quantity of memory available per process. For more information, please consult our documentation on the memory allocation of a CPU job and/or of a GPU job.

- Detailed examples of jobs are available on our Web site in the sections entitled “Execution/commands of a CPU code” and “Execution/commands of a GPU code”.

Job submission

To submit a batch job (here, the Slurm script mon_job), you must use the following command:

$ sbatch mon_job

Your job will be placed in a partition according to the values requested in the Slurm directives. We advise you to set the parameters concerning the number of CPUs/GPUs, and the elapsed time, as accurately as possible in order to have a job return as rapidly as possible.
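On submission, sbatch normally reports a line of the form "Submitted batch job <id>". A minimal sketch for capturing this job ID, so that it can be passed to the Slurm commands squeue and scancel; the helper name job_id_from_sbatch and the ID 123456 are hypothetical:

```shell
# Extract the numeric job ID from the line printed by sbatch on standard output.
job_id_from_sbatch() {
    awk '/^Submitted batch job/ { print $4 }'
}

# Demonstration on a sample line
echo "Submitted batch job 123456" | job_id_from_sbatch   # prints 123456

# Possible use on Jean Zay (not run here):
#   jobid=$(sbatch mon_job | job_id_from_sbatch)
#   squeue -j "$jobid"     # show the state of the job
#   scancel "$jobid"       # cancel the job
```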

Comments:

- For monitoring and managing your batch jobs, you should use the Slurm commands.

- In batch mode, the user cannot intervene during the execution of commands except to stop/kill the job. Consequently, file transfers must be done without using/requiring a password.

- The compute nodes have no access to the Internet which prevents all downloading (Git repositories, Python/Conda installation, …) from these nodes. If needed, these downloads can be done from the front ends or from the pre-/post-processing nodes, either before the code execution or via the submission of cascade jobs.

- If you want to execute a job from the pre-/post-processing machine (jean-zay-pp), you must use the Slurm directive shown below in the submission script:

#SBATCH --partition=prepost

If this submission directive is absent, the job will execute in the default partition, thus on the compute nodes.
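As an illustration of a download step submitted to the pre-/post-processing nodes and followed by a cascade job, here is a sketch under stated assumptions: the script name download_job, the directory my_case and the repository URL are hypothetical. The --parsable and --dependency=afterok options are standard sbatch options; the chaining commands are shown as comments because they can only be run on Jean Zay.

```shell
# Write a download job for the pre-/post-processing nodes to the file download_job.
cat > download_job <<'EOF'
#!/bin/bash
#SBATCH --job-name=download
#SBATCH --partition=prepost
#SBATCH --time=00:30:00

cd "$WORK/my_case"
git clone https://example.org/my_project.git
EOF

# Cascade (not run here): the compute job mon_job starts only if the
# download job finished successfully.
#   jobid=$(sbatch --parsable download_job)
#   sbatch --dependency=afterok:"$jobid" mon_job
```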

For any problem, please contact the IDRIS User Support Team.

12. Training courses offered at IDRIS

IDRIS training courses

IDRIS provides training courses for its own users as well as to others who use scientific computing. Most of these courses are included in the CNRS continuing education catalogue CNRS Formation Entreprises which makes them accessible to all users of scientific calculation in both the academic and industrial sectors.